In 2026, AI is everywhere in Nigeria—from the bots handling customer complaints in Ikeja to the algorithms predicting crop yields in the North. However, with this power comes a new responsibility.

The Nigerian National AI Strategy, fully operational this year, emphasizes that AI must be “Human-Centric.” For your business, this means moving past “how can we use AI” to “how can we use AI without losing the trust of our people?”

1. Data Privacy and NDPR 2.0 Compliance

In 2026, the Nigeria Data Protection Regulation (NDPR) has been updated to specifically address AI training. You cannot simply dump your customers’ private data into a public AI model to “see what happens.”

- The Risk: Feeding sensitive customer phone numbers or transaction histories into public AI models is a major data breach. That data now lives in the “cloud” forever and could be leaked to competitors.

- The Ethical Move: Only use “Enterprise-Grade” AI tools that offer Data Siloing—meaning your data stays within your company and is never used to train the public model.

- The Protocol: Always obtain explicit consent if you are using a customer’s data to generate “Personalized AI” insights.

Technical Resource: Review theNigeria Data Protection Commission (NDPC)for the latest 2026 guidelines on AI-specific data processing.

2. Fighting “Hidden Bias” in the Nigerian Context

Most AI models are trained on Western data. In 2026, we’ve seen that this can lead to “Algorithmic Bias” in Nigeria. For example, an AI used for hiring might inadvertently favor certain accents or CV formats that don’t reflect the diversity of the Nigerian talent pool.

- The Ethical Move: “Stress-test” your AI. If you use AI to score loan applications or hire staff, manually check the results for a week. Does it consistently reject people from certain regions or backgrounds?

- The “Vibe Check”: Ensure your AI understands the “Nigerian context”—including local slang, Pidgin nuances, and cultural references—to avoid alienating your customers.

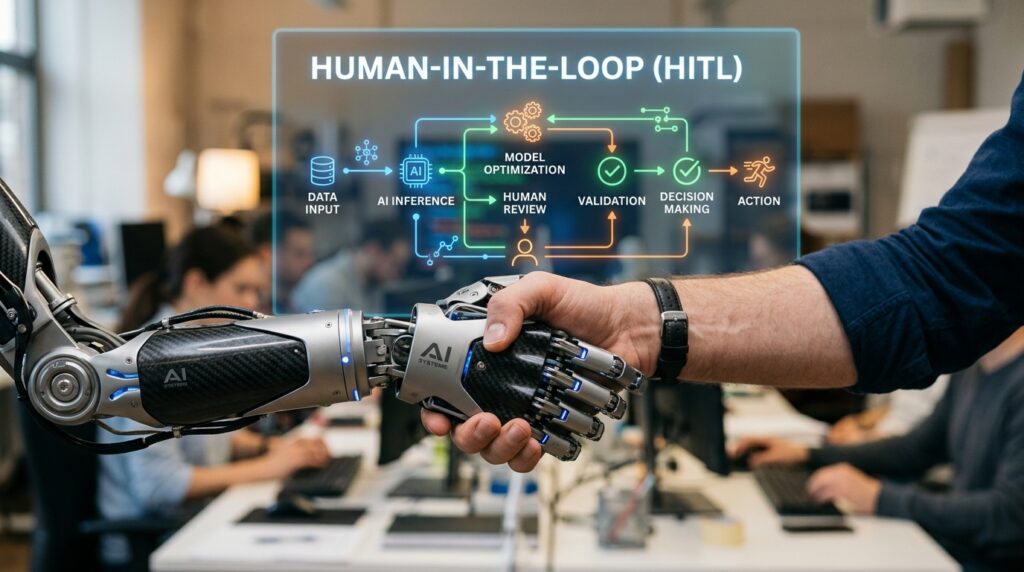

[IMAGE GENERATION PROMPT 3 – SECTION SEPARATOR]

Prompt: A close-up of a human hand and a robotic hand shaking. In the background, a transparent digital screen shows a complex flowchart labeled “HUMAN-IN-THE-LOOP.” The atmosphere is collaborative and trustworthy.

3. The “Human-in-the-Loop” Mandate

The most dangerous ethical mistake is “Automated Cruelty”—allowing an AI to make life-changing decisions for your customers or staff with no human oversight.

- The Protocol: For high-stakes decisions (like firing an employee, denying a large loan, or canceling a service), an AI should only provide a recommendation. A human must make the final “Yes” or “No” call.

- Transparency: If a customer is talking to a bot, tell them. In 2026, “bot masquerading” as a human is considered a major ethical (and potentially legal) violation in Nigeria’s digital space.

The 2026 Responsible AI Checklist

To align with this week’s theme of Action, run your business through this 5-point ethical audit:

| Ethical Pillar | Question to Ask | Action |

| Transparency | Does the user know they are talking to an AI? | Add a “Powered by AI” badge to bots. |

| Privacy | Is our customer data being used to train public AI? | Switch to “Private Instance” or Enterprise tools. |

| Fairness | Does the AI favor one tribe, gender, or age group? | Run a monthly “Bias Audit” on AI outputs. |

| Accountability | Who is responsible if the AI makes a mistake? | Appoint a “Chief AI Ethics Officer” (or designate a lead). |

| Safety | Can our AI be “prompt-injected” to leak secrets? | Conduct a “Red-Team” test on your AI interfaces. |

The Takeaway: Ethics as a Competitive Advantage

In Nigeria, where trust is the primary currency of business, being “The Ethical Choice” is a massive advantage. Customers in 2026 are tech-savvy; they will flock to businesses that respect their privacy and treat them fairly.

Responsible AI isn’t just a hurdle—it’s the foundation for a business that scales with integrity.